
5 Levels of AI Proficiency and Collaboration

What Explains All the Waste and Variation?

Why do two people use the same AI model and get completely different results? One produces clarity and insight; the other, noise and frustration. Why do some think technology is coming to take all our jobs while others think it is garbage?

After two years of researching and building web app tools with large language models (LLMs), I began to see that the answer wasn’t just in the technology, or even in the user’s mindset. It was deeper than that. Some tasks lend themselves to fuller automation, but most can only be meaningfully augmented. The difference depends on how people collaborate with this new technology.

Over time, I started to see a few clear patterns in that collaboration, which I now call the five levels of AI proficiency. These levels represent how individuals and organizations engage with the tools, from casual experimentation to structured integration. Roughly 95% of users remain at what I call Level 1 and Level 2: typing casual questions into a chat box and getting varied results. A much smaller group, operating at Levels 3, 4, and 5, achieves consistent, high-quality outcomes by treating AI as a process to be improved, not a magic box to be queried.

As a lean thinker, I found this variation familiar. Two operators can run the same equipment: one produces quality parts, the other defects. The problem isn’t just the machine. It’s the materials, the tools, the methods, and the entire process. The same is true with AI. Tools alone don’t guarantee good results; capability, standards, and feedback loops matter just as much.

The Variation Problem: A Lean Thinker’s Puzzle

When I first began experimenting with LLMs, my results were inconsistent. Some expert practitioners I observed were generating genuinely insightful analyses, while others, myself included, were producing what we’d call in lean terms “waste,” often using the same model.

Same technology. Significantly different outcomes. So I decided to invest more time in learning about LLMs and how they operate. In lean, we don’t just blame the equipment or the operator. We investigate the “Why.” We study the process, the training, and the method. It was sort of like “going to gemba,” except this was all digital learning. I dove into learning with books, papers, free courses from Stanford and Harvard, online communities, and endless experiments. And over time I started to see some patterns.

The Five Levels of AI Proficiency

To help explain the situation I came up with the following framework. It outlines five levels of AI proficiency: how users at each level interact with LLMs and what they can expect in terms of output quality. Here is my main takeaway: the method by which you use the model dictates your outcome and your opinion of the technology.

Level 1: Raw Dialogue Box (≈95% of Users)

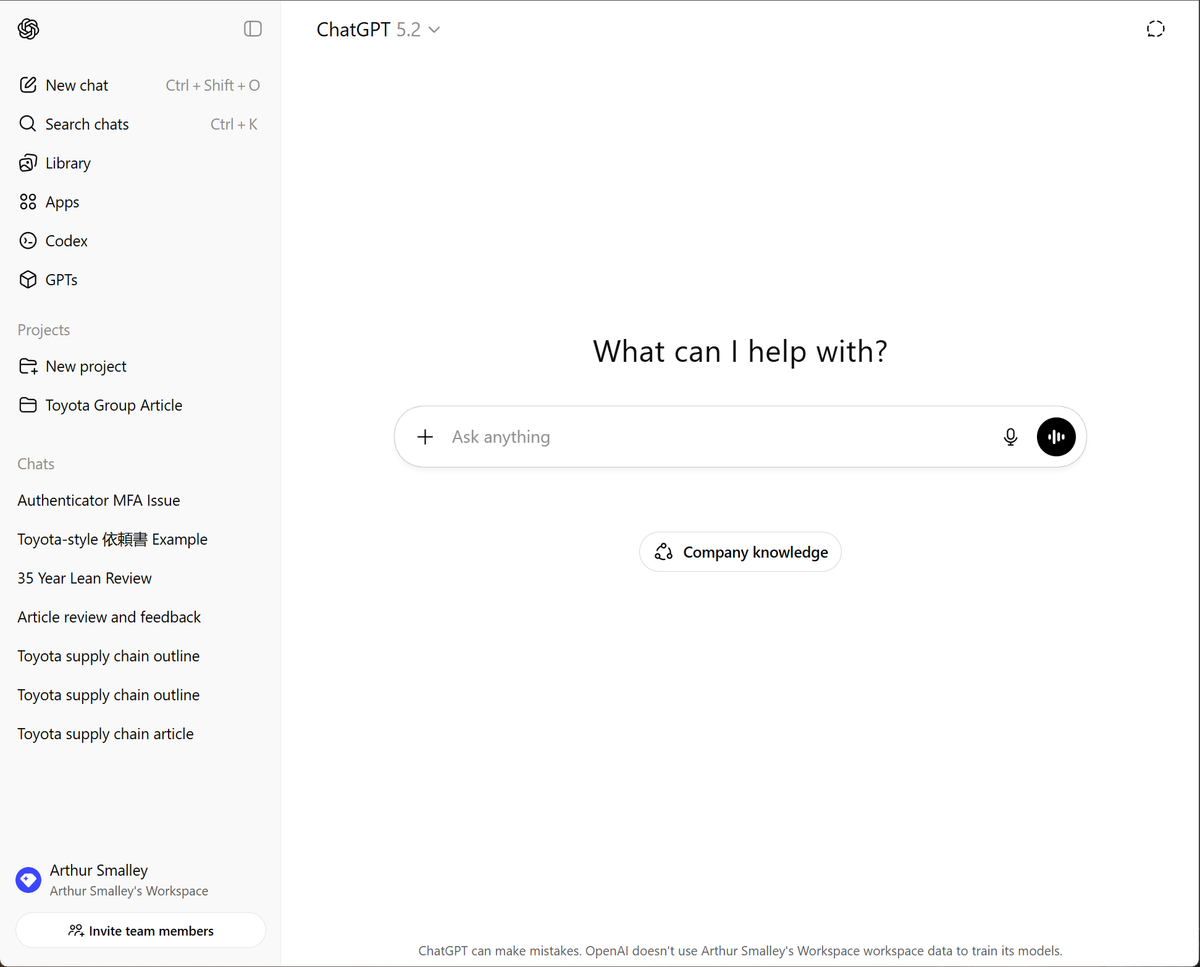

This group uses LLMs such as OpenAI’s GPT models, Gemini, or Claude by typing casual questions into ChatGPT or similar tools. The responses are sometimes helpful, often generic, and occasionally hallucinated. The user has no control over the model’s behavior and is not impressed by the outcome. They might give it a 70-80% score and put it down. The user in this case naturally walks away thinking “AI is overhyped.” It’s still like using Google, Wikipedia, or Reddit. Some nuggets are in there, but you must dig for them.

Level 2: Basic Prompt Engineering

At this level, the user has discovered that the quality of the model’s output depends heavily on the precision and clarity of the input: how questions are asked and how instructions are framed truly matters. The terms for this are prompt engineering and context engineering. Providing necessary context, assigning roles, structuring requests, and giving examples to the model dramatically improve results. A “system prompt” might run to two pages of text, for example. Suddenly the perceived quality of the model’s results rises to the 90% acceptable range or higher. Users here start steering the model rather than being at its mercy. Many stop here, and for casual use, that’s fine.
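As a minimal sketch of the four ingredients named above (context, role, structure, and examples), the snippet below assembles them into one prompt string. The function name `build_prompt`, its parameters, and the sample text are all hypothetical and made up for illustration; no particular tool requires this layout.

```python
def build_prompt(role, context, task, examples=(), output_format=""):
    """Assemble a structured prompt from a role, context, examples, and a task."""
    sections = [f"Role: {role}", f"Context: {context}"]
    # Each example is an (input, output) pair shown to the model as a pattern.
    for i, (example_in, example_out) in enumerate(examples, start=1):
        sections.append(
            f"Example {i} input: {example_in}\nExample {i} output: {example_out}"
        )
    sections.append(f"Task: {task}")
    if output_format:
        sections.append(f"Output format: {output_format}")
    return "\n\n".join(sections)


# All text below is invented sample content.
prompt = build_prompt(
    role="You are a lean coach reviewing a problem statement.",
    context="The team runs a machining line with rising scrap on one part number.",
    task="Critique the problem statement below and suggest one sharper version.",
    examples=[("Scrap is too high.", "Scrap on part A rose from 2% to 5% in March.")],
    output_format="Two short paragraphs, no bullet points.",
)
print(prompt)
```

The same string can be pasted into a chat box or used as a system prompt; the point is only that each section is written deliberately rather than left for the model to guess.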

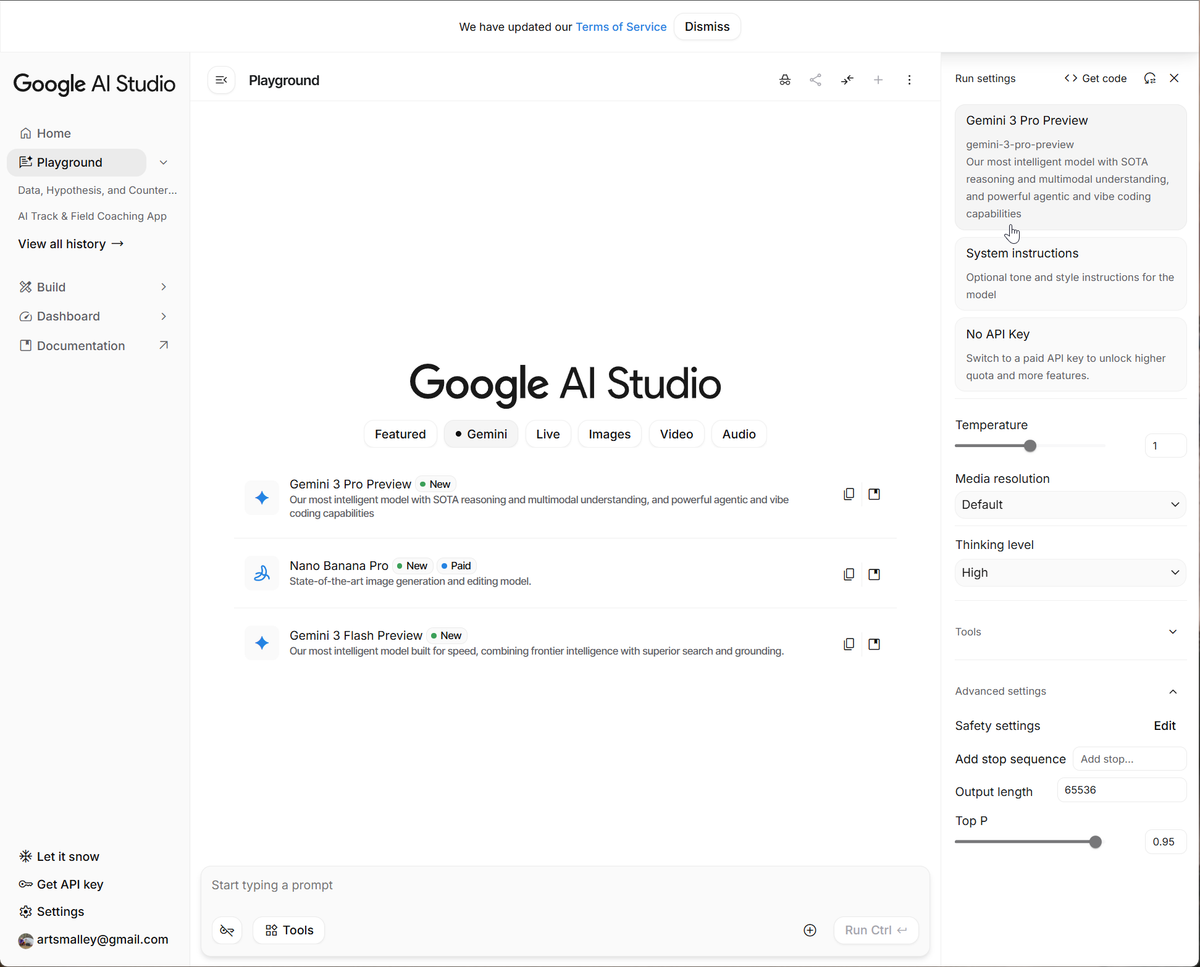

Note that here you have a lot more control over the choice of model, temperature, thinking mode, safety settings, tools, Top P, and so on. Learning these settings and uploading reference documents gives you more control over the model, and the quality of the output goes up as well.
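To make two of those settings concrete, here is a small sketch of what temperature and Top P do to a model's next-token probabilities. It is a simplified illustration in plain Python with made-up scores for four candidate tokens; the function names are hypothetical, and real providers may implement the details differently.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p=0.9):
    """Keep the smallest set of most likely tokens whose total probability reaches top_p."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, running = [], 0.0
    for i in ranked:
        kept.append(i)
        running += probs[i]
        if running >= top_p:
            break
    kept_total = sum(probs[i] for i in kept)
    # Renormalize so the surviving tokens' probabilities sum to 1.
    return {i: probs[i] / kept_total for i in kept}


toy_logits = [2.0, 1.0, 0.5, -1.0]  # made-up scores for four candidate tokens
cool = softmax_with_temperature(toy_logits, temperature=0.5)
warm = softmax_with_temperature(toy_logits, temperature=1.5)
print("top token share at T=0.5:", round(cool[0], 3))
print("top token share at T=1.5:", round(warm[0], 3))
print("tokens kept at top_p=0.9:", sorted(top_p_filter(warm, top_p=0.9)))
```

A lower temperature concentrates probability on the most likely token, which tends to give more consistent answers. A higher temperature spreads it out, which tends to give more varied ones. Top P then trims the unlikely tail before sampling.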

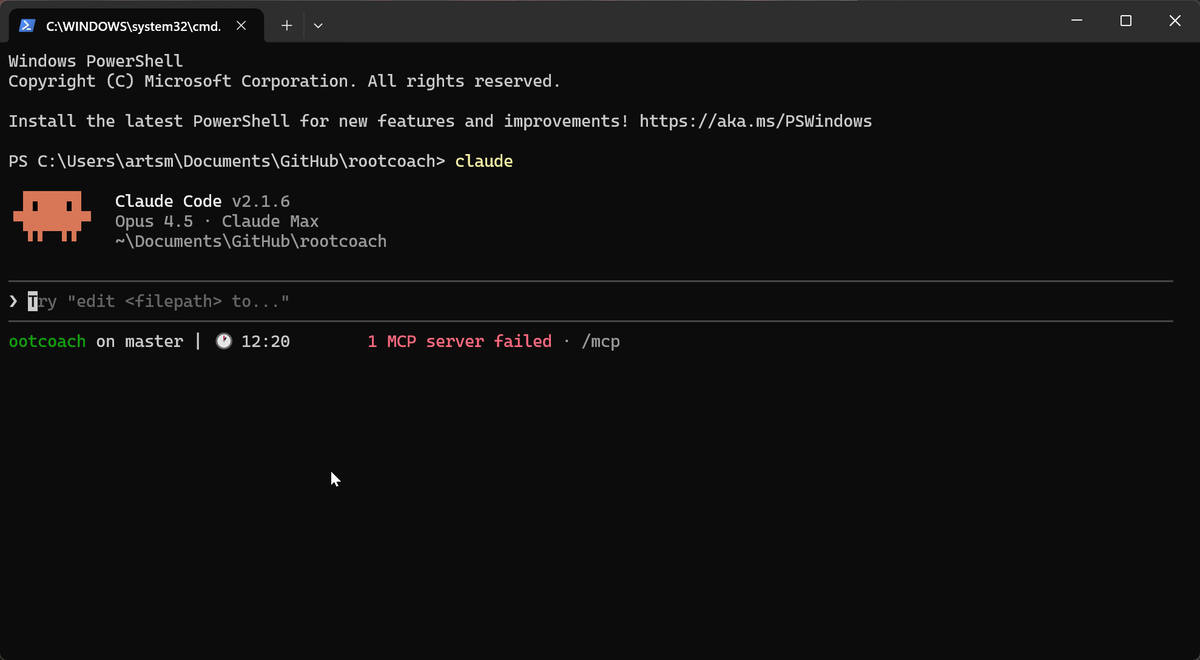

Level 3: Developer Tools & APIs

At this level the user starts to interact with the model through some basic lines of code and specific interfaces. A popular development tool is Claude Code. At this stage you are leaving the chat box interface behind and going straight to the command line interface (CLI). Here you experiment in coding environments or specialized tools, adjusting model parameters for creativity, consistency, or focus, and giving the model your custom instructions in natural language (or in programming languages like Python). Suddenly the model responses start getting very good and often change your opinion on the whole topic of AI. In other words, you have learned how to treat the model like a controllable system, not a black box. Here you can assign work to a coding agent, build in quality checks, and create custom programs. This level represents PDCA applied to AI use, and, if you experiment with the settings under the hood, you start getting responses (and custom software programs) that are higher quality, more insightful, and in many cases amazing.

The problem, however, is that only a tiny portion of the public has any idea how to achieve this level. Software developers use it daily. Even with a basic programming background, it took me close to a year to get comfortable at this level. The learning curve is still too steep for the casual user of Level 1 or Level 2. However, it is getting easier and will only get better.
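The Level 3 idea of building in quality checks and running PDCA on the model's output can be sketched as a small loop: generate a draft, check it, and feed any problems back into the next attempt. Everything here is an assumption for illustration. `fake_model` is a stand-in so the sketch runs offline, its canned answers are invented, and in practice `generate` would wrap a real API or CLI call.

```python
def run_with_checks(generate, check, prompt, max_attempts=3):
    """Call generate(prompt), check the draft, and feed problems back until it passes."""
    feedback = ""
    history = []
    for attempt in range(1, max_attempts + 1):
        draft = generate(prompt + feedback)  # Do
        problems = check(draft)              # Check
        history.append((attempt, draft, problems))
        if not problems:
            return draft, history
        # Act: fold the findings into the next attempt's instructions.
        feedback = "\n\nFix these issues: " + "; ".join(problems)
    return None, history


def fake_model(prompt):
    """Hypothetical stand-in for a real model call; the answers are invented."""
    if "Fix these issues" in prompt:
        return "Root cause: worn fixture. Evidence: 12 of 15 defects traced to fixture 3."
    return "Root cause: operator error."


def needs_evidence(draft):
    """Return a list of problems; an empty list means the draft passes."""
    return [] if "Evidence:" in draft else ["state the evidence for the root cause"]


result, history = run_with_checks(fake_model, needs_evidence, "Summarize the root cause.")
print(result)
print("attempts:", len(history))
```

The check here is a trivial string test. The design choice that matters is that the check is explicit and repeatable, so each cycle leaves a record of what failed and why.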

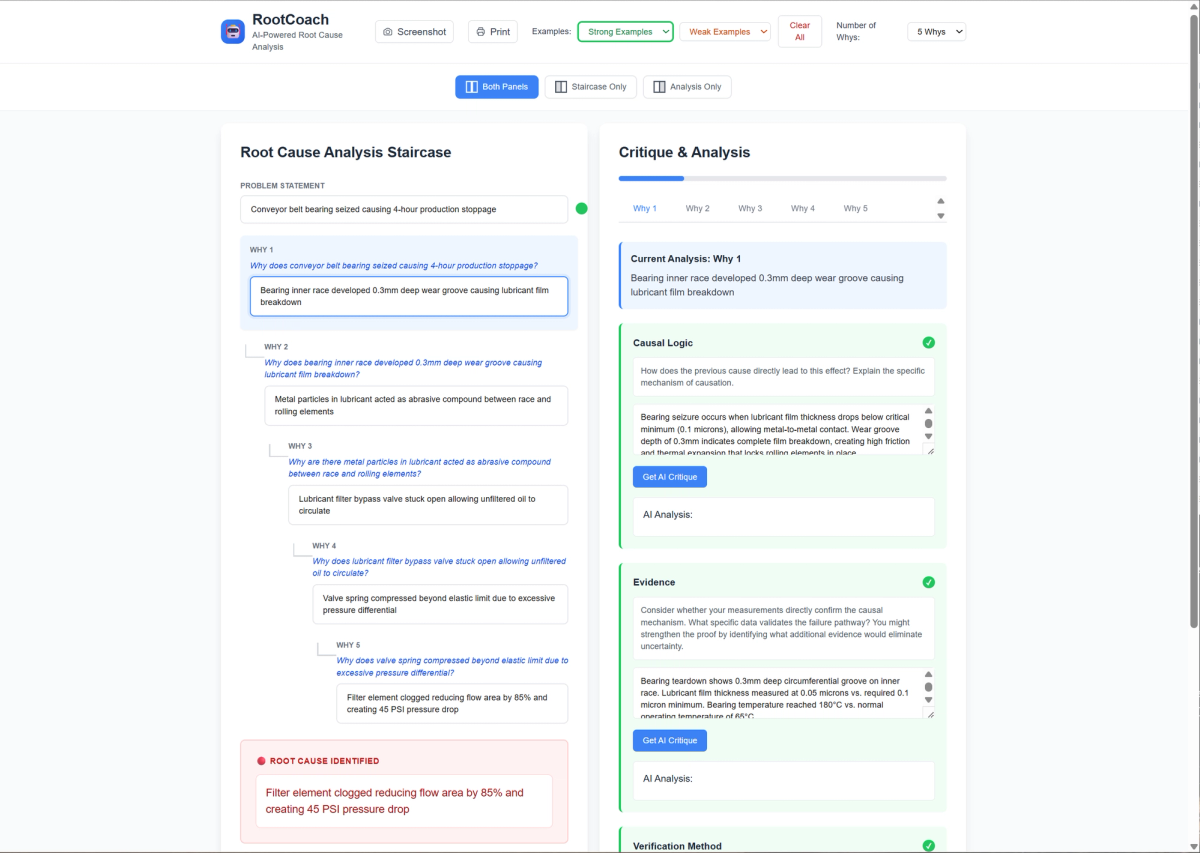

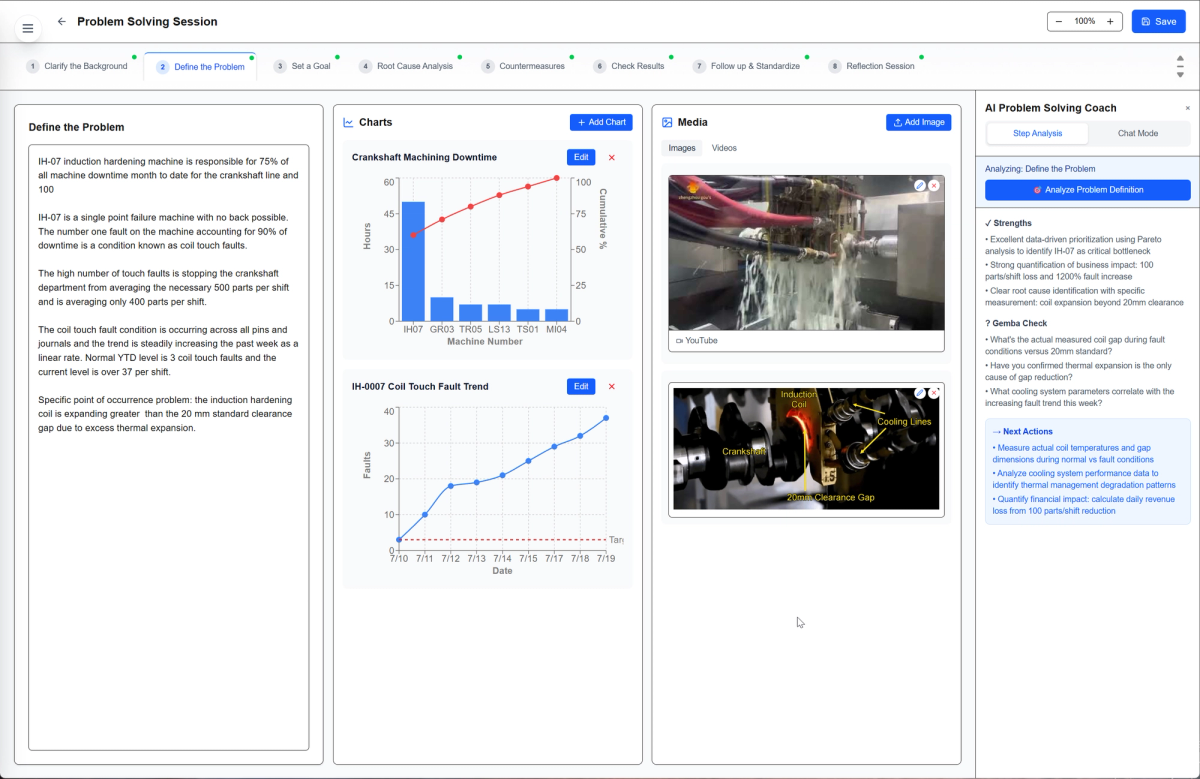

Here I was able to build something I had wanted to create for years: a lean "5 Why" tool called Root Coach. It evaluates the user's 5 Why root cause analysis with regard to causal logic, evidence, and verification method. The feedback is intended to augment our human thinking capability.

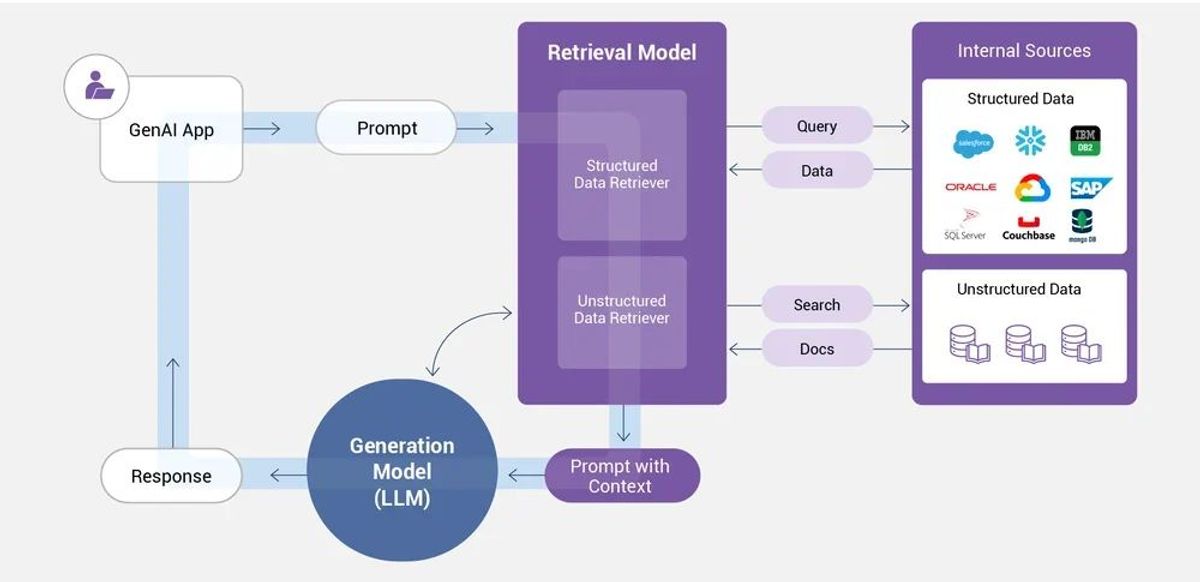

Level 4: Custom Applications with RAG Architecture and Tools

At this level, you’re building your own AI tools. You design specialized interfaces, integrate specific domain knowledge, and create retrieval-augmented generation (RAG) databases for context. Coding skills help here, but often the AI can explain how to hook things up; that is how I figured out my own applications. In this realm there are very specific tools, like n8n or MCP servers, that can also expand what’s possible. The results in my experience are very impressive but are also very domain-specific and, hence, tough to disseminate. These applications can automatically assist with research, coding, planning, documenting, and editing, and often produce amazing results far beyond what I assumed possible. However, the caveat is that very few users can interact with the models at this level. This is still largely the realm of software developers.

At this level you can build high-quality software applications with user login, authentication, databases, and RAG architecture. RAG allows you to combine your own data with a large language model and create highly customized applications built on the specific data sets you designate. At this level the LLM "hallucination" problems are reduced significantly. The problem, of course, is that this takes considerable skill to build in conjunction with LLMs, and it brings into play data privacy and a myriad of security concerns. I built a custom problem-solving coaching agent. Even with the help of AI coding tools, it still took months to finalize.
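The retrieval step behind RAG can be illustrated with a toy example: find the stored notes most relevant to a question, then place them in the prompt so the model answers from that material. This sketch swaps in simple word-overlap (cosine similarity on word counts) for the embeddings and vector database a production system would typically use. The notes and all function names are made up for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, documents, k=2):
    """Return up to k documents most similar to the question."""
    q = Counter(tokenize(question))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:k] if score > 0]


def build_grounded_prompt(question, documents, k=2):
    """Put the retrieved notes in front of the question as the only allowed context."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents, k))
    return (
        "Answer using only the context below. "
        "If the context does not cover it, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Made-up notes standing in for a private knowledge base.
notes = [
    "Standard work for cell 4 requires a torque check every 50 parts.",
    "The 5 Why method asks why repeatedly until a verifiable root cause is found.",
    "Lunch menu for Friday is curry rice.",
]
print(build_grounded_prompt("How often is the torque check in cell 4?", notes, k=1))
```

Grounding the prompt in retrieved text is one reason hallucinations tend to drop at this level: the model is asked to answer from supplied material rather than from memory alone.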

Level 5: Agentic Systems and Fine-Tuning

At the fifth level of the framework, models can be fine-tuned by end users to get more impressive results. And it is possible to chain “agents” together to complete certain tasks start to finish. Here the focus shifts to orchestration — multi-agent systems, fine-tuning, and autonomous workflows. While enterprise solutions are emerging in this area, they are extremely fragile, limited, and very task-specific.
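The orchestration idea, chaining agents so each one hands its result to the next, can be sketched with ordinary functions. In this illustration each "agent" is a hypothetical stand-in for a model call, and the shared `state` dictionary, agent names, and review rule are all assumptions, not a description of any specific product.

```python
def research_agent(state):
    """Stand-in for a model call that gathers facts on the topic."""
    state["facts"] = [f"fact about {state['topic']}"]
    return state


def writer_agent(state):
    """Stand-in for a model call that drafts a summary from the facts."""
    state["draft"] = "Summary: " + "; ".join(state["facts"])
    return state


def reviewer_agent(state):
    """A reviewer step gates the handoff; here the rule is only a length check."""
    state["approved"] = len(state["draft"]) > len("Summary: ")
    return state


def run_pipeline(agents, state):
    """Pass a shared state dict through each agent in order, logging every step."""
    for agent in agents:
        state = agent(state)
        state.setdefault("log", []).append(agent.__name__)
    return state


final = run_pipeline(
    [research_agent, writer_agent, reviewer_agent], {"topic": "changeover time"}
)
print(final["draft"])
print("approved:", final["approved"], "| steps:", final["log"])
```

Real agentic systems add branching, retries, and tool calls at each step, and every added handoff is another place for an error to propagate. That is part of why such systems can be fragile.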

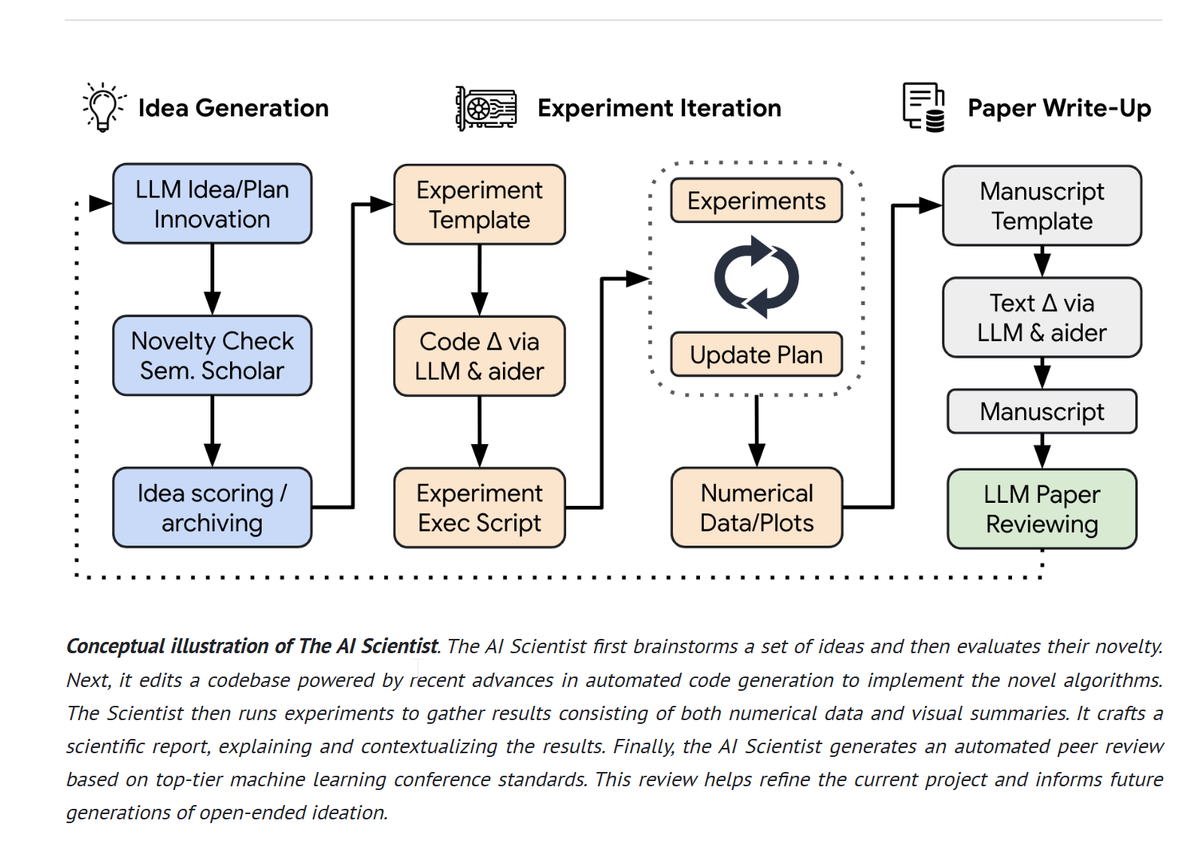

Companies like Sakana.ai in Japan have developed products capable of executing the steps of the scientific method and creating journal-quality articles under experimental conditions. Other companies are making impressive products for coding, research, and other specialized purposes using both local and frontier models.

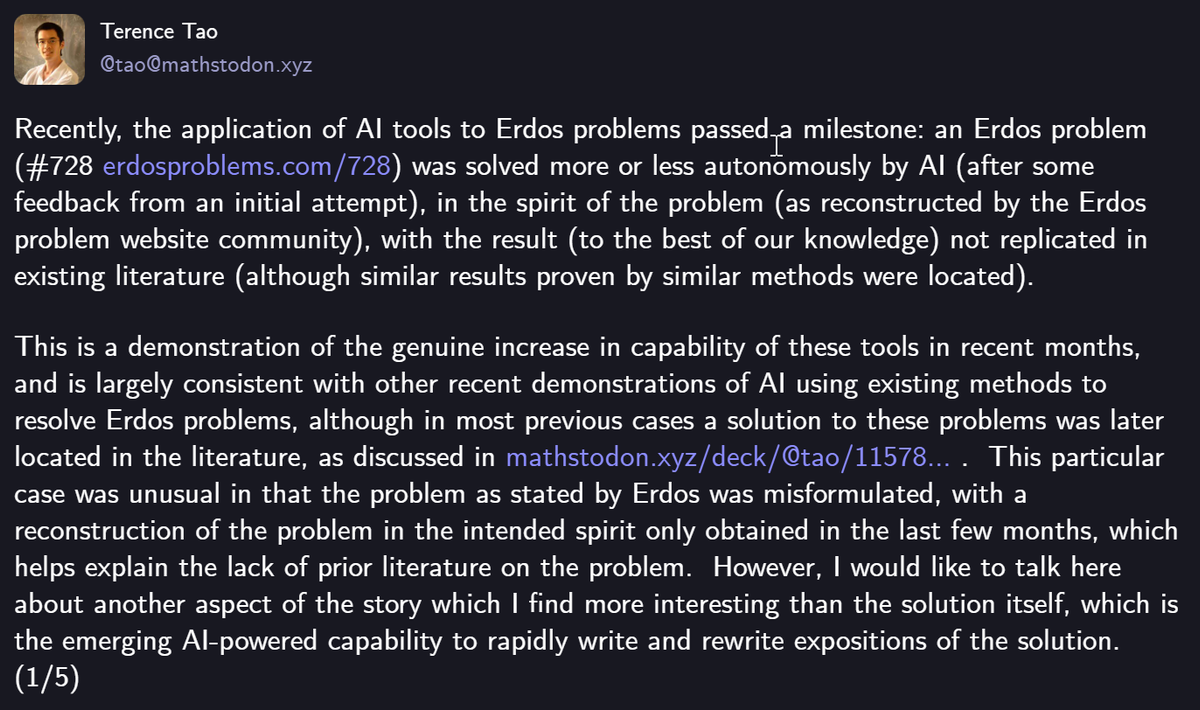

Additionally, we are seeing examples of complex unsolved math problems being solved by LLMs.

Why This Matters

At Level 1, users are often disappointed as results vary wildly, leading many to dismiss AI as just hype. At Level 2, users see some of the potential of LLM technology. At Levels 3 and 4, users control model behavior, integrating real data and producing consistent high-quality results. At Level 5, people are orchestrating systems of work across complex operations. Same technology. Different methods. Different outcomes.

This unfortunate reality explains why some insist that AI is “slop” while others quietly build useful, high-impact niche systems. It’s like buying a new CNC machine tool and expecting it to produce perfect parts without setup or training. It just does not happen. The very few general users at Levels 3–5 have invested in learning, experimentation, and iteration, and have discovered what’s possible beyond surface interaction. These levels are still largely a software developer’s realm, and that investment is the difference between frustration and capability, between waste and value.

Fortunately, the barrier between Level 2 and Level 3 is shrinking. Tools like Claude Cowork, Cursor, and many other AI-assisted development environments now let non-programmers access advanced settings and build simple applications through natural language. You describe what you want, and the AI writes the code. The learning curve still exists, but it's no longer a wall.

Reflections & Five Lessons Learned

I am in the camp that thinks the narrow AI tools (large language models) are overhyped in the short term but probably undervalued in the long term. The economics don't make financial sense yet. However the technology will be as impactful as the internet, websites, and Google Search were decades ago. I personally don’t think there is an impending overnight elimination of 40% of white-collar jobs as hyped in many news articles. Those claims seem to come from those who profit from selling the services of the models. I fully expect the models to get stronger, the interfaces to get easier, and the barriers to learning to get lower. The models will work their way into all language-related tasks over time and “augment” our abilities in profound ways. Humans + AI > Problems.

Things are going to change as technology matures, but I think these five pieces of advice will hold up in the long term.

1. Investment in Learning Pays Dividends

Real value requires real learning. As with any language, a phrasebook gets you tourist results; grammar and practice lead to fluency. I’m not a software engineer. I built car engines at Toyota, not web apps. Yet I built a 30,000-line web app with secure authentication, databases, and APIs in a couple of months. I learned by asking AI to be my coach. The models themselves can teach you. But you must put in the time. New beginner-friendly tools like Claude Cowork are emerging as well.

2. Start Small, Not Large

Don’t always begin with an enterprise-AI-strategy-level problem. Pick one narrow, real problem that annoys you and build something small with AI to solve it. When getting started, you will learn more by doing small projects than large ones. I started by creating a simple one-page drag-and-drop analysis tool, then a 5-Why Root Cause Coach to help people strengthen their thinking. Lean teaches us to start with value, not technology. One problem, one tool, one experiment.

3. Apply Lean Thinking to AI Work

The AI world is going to eventually wrestle with the problem that language alone does not equal value-added work. More computing power isn’t always the answer. Smarter, simpler design often is. Start with the problem, not the tool. Ask:

• What value am I creating?

• What problem am I solving?

• Am I reducing waste or creating digital clutter?

Purpose before technology. Value before features. Go to the gemba — check with end users. Ensure what you build adds value, not complexity.

4. You’ll Need Help — But Less Than You Think

To reach Levels 3–4 of my framework, guidance helps. But you may need less outside support than you imagine. I learned roughly 90% of what I needed by using AI itself as my instructor — defining problems, diagnosing errors, and iterating solutions. I think the same is going to happen with a lot of skills at work. AI will augment our tasks but not fully replace them. That’s amplification in action: AI making people more capable, not replacing them.

5. Plan to Rebuild Everything Multiple Times — PDCA

When it comes to learning and projects involving AI, kaizen is going to be your ally. All of your first versions will be rough. Accept that fact and plan for it. Use PDCA: Plan, Do, Check, Act. Every cycle reveals something new. Most of my current tools are on their sixth, seventh, or eighth iteration. Each rebuild taught critical lessons. Expect learning, not perfection, and your journey will be smoother.

Augmentation, Not Replacement

Two years ago, I was a skeptic at Level 1 and not optimistic about AI’s potential in the real world. In hindsight, I was a bit like the poor golfer blaming his clubs for his poor results. Over time I worked my way up the levels, got closer to shooting par, and became more optimistic about the potential of LLMs in lean and in work in general. I think that AI can be a powerful lever for democratizing knowledge while also making certain products easier, faster, and better to create.

The five levels outlined here form a roadmap: where most people are stuck (Level 1), where improvement begins (Level 2), and where consistent value creation emerges (Levels 3, 4 and 5). You don’t have to reach Level 5 to benefit. Simply moving from Level 1 to Level 2 can improve your results. But understanding the landscape helps you see what’s possible. We’ve got some knowledge development projects underway at LEI that we hope to share at the 2026 Lean Summit in Houston.

Just as lean teaches that process matters as much as tools, AI reminds us that skill and method matter as much as technology.

Humans + AI > Problems.

The tools are ready. The question is: are we ready to learn to use them well?

Start climbing. Start small. Start today.

Update (January 2026):

This article was originally written in Fall 2025 and has been lightly updated to reflect newer developments in AI collaboration tools and capabilities that emerged in late 2025 and early 2026 (e.g., agentic workflows, collaborative AI systems, and recent research results). The core framework and conclusions remain unchanged.

About Art Smalley

Art Smalley is a leadership and Lean management expert with nearly 40 years of experience in operations and continuous improvement. He worked at Toyota and McKinsey & Company. He is a senior advisor to the Lean Enterprise Institute, author of four books, and has helped organizations worldwide implement sustainable improvement practices.
