Lean AI: Navigating Hype and Reality in the Age of Artificial Intelligence

After four decades watching automation promises in manufacturing, I've learned to separate the real breakthroughs from the demos. But the signals surrounding AI and its use by lean organizations couldn't be more confusing.

Geoffrey Hinton, the "godfather of artificial intelligence," warns that AI will create "massive unemployment and soaring profits."[1] Spending on data centers in 2025 is estimated to be $400 billion, with revenue of only $15-20 billion and $40 billion in annual depreciation costs.[2] Meanwhile, Tesla showcases $200,000-$500,000 humanoid robots at glitzy events — robots that, it turns out, require human operators to pour drinks and play rock-paper-scissors.[3]

Yet in quieter corners of the economy, real transformation is happening. Software developers are watching AI tools reshape frontend coding and lower the barrier to entry for beginners. A Stanford computer science professor has returned to handwritten exams because his students could no longer distinguish their own knowledge from AI assistance.[4] McKinsey & Co. has deployed 12,000 AI agents and reduced its workforce from 45,000 to 40,000 people while generating 40% of its revenue from tech advisory work.[5]

So what's actually happening, and what does this mean for the lean community? The answer lies in understanding a crucial distinction that cuts through the confusion: the difference between Big AI and Narrow AI. From there, we can use AI as a partner to lean rather than as an all-encompassing solution.

Big AI vs. Narrow AI: The Framework We Need

Big AI represents the convergence of five technological pillars that have developed largely in isolation for decades: robotics, vision systems, expert systems, natural language processing (including today's large language models), and machine learning/predictive analytics. When these five pillars finally integrate, and robots can see, think, act, communicate, and learn together, we'll have something approaching the transformative AI that dominates headlines.

This convergence is real, and the long-term hype is probably justified. But I also think that it's many years away, not months.

I was heavily involved with automated robots, machine tools, automated guided vehicles, and automated assembly devices using precision measurement in Toyota engine plants more than 30 years ago. That technology has continued to improve for decades. And as soon as there is an affordable robot that can climb a ladder to put up my Christmas tree lights or replace a ceiling fan, I am all in. However, the current “demo” Tesla robots and the infrastructure economics that don't add up are symptoms of companies trying to force rapid Big AI timelines that don't match technological reality.

Narrow AI, by contrast, is disrupting specific domains right now. Generative AI tools are genuinely transforming software development and other fields. They're changing how consultants research and write, how legal research is conducted, how professors design assessments, and how knowledge workers access expertise to write better reports. It will affect the way we teach and learn in the coming years as well. My kids study AP Physics in high school using a combination of homework, ChatGPT with prompt engineering, and Khan Academy videos. This isn't the robot butler or the full self-driving car, but it's a real disruption happening today in knowledge work.

I think the confusion in AI discourse comes from mixing these distinctly different timelines and expectations. Narrow AI capabilities are advancing impressively but remain largely domain-specific. Big AI, and especially convergence across domains, has even more transformative potential but is still distant. Both situations are true simultaneously.

Where Narrow AI Is Actually Working Now

Narrow AI and specifically large language models are what mainly drive the hype cycle and inflated claims of today. The rapid improvement in this narrower domain gives the impression we are on the edge of total AI convergence in all areas. But, in my opinion, we are not.

The pattern in current Narrow AI disruptions follows a clear complexity gradient. For example, frontend web development, with its more established patterns and extensive training data, has been significantly transformed by AI coding assistants. Backend development, with its complex business logic and security considerations, has seen less dramatic change (and also some spectacular security failings in AI code developed by inexperienced developers).

The same is true in other professions. Generative AI can make quick inroads at the lower end of the difficulty spectrum and in certain cognitive tasks. Everyone has probably had their own version of a ChatGPT moment. Mine was using the models to review problem-solving reports and spot root-cause errors in logic for complex cases. Technology is simply following the path of least resistance and will continue to evolve and improve. In consulting, AI agents handle document summarization, basic research, and presentation formatting, but not strategic thinking. This frees consultants (rather than eliminating them) for higher-level synthesis, client relationship management, and implementation support. In academia, a switch from take-home exams to handwritten, proctored tests is occurring because students and teaching assistants couldn't reliably distinguish student knowledge from AI assistance.

These examples share a common thread: AI is changing the nature of knowledge work, not eliminating it. The Narrow AI disruption is real but requires human judgment about where, when, and how to apply it effectively. Even so, it will have a major societal impact that we are only beginning to grasp. This feels a lot like the early internet all over again. In 1995, we thought the web was just for email and basic information sharing, and Yahoo was the dominant search engine. When YouTube launched a decade later, it was known as a platform for cat videos and amateur clips; nobody predicted it would become the world's largest educational resource and transform entire industries from entertainment to journalism.

The Choice that Shapes Everything

Here's why this moment matters for the lean community: the patterns we establish now with Narrow AI will also shape how Big AI develops. We're at a fork in the road between two fundamentally different approaches.

The first path — extraction — follows what Geoffrey Hinton calls the capitalist default: "AI - Humans = Profit." Rich people use AI to replace workers, creating massive unemployment while concentrating wealth. We can see this model in action when companies use AI primarily to reduce headcount rather than improve value delivery. It's the consulting firm that eliminates 5,000 people and pockets the savings without considering the lost institutional knowledge. It's the manufacturer that opts to use AI for surveillance and control rather than problem-solving support.

The second path, amplification, embodies lean thinking: "Humans + AI > Problems." Here, AI amplifies human capability rather than replacing human dignity. A maintenance technician uses predictive analytics to prevent failures that would have been impossible to predict through experience alone. A continuous improvement team uses AI to analyze customer feedback patterns, spotting root causes in days rather than months. Managers sharpen their hoshin plans through catchball with both AI and their colleagues. A shopfloor worker gets instant access to expert troubleshooting guidance, democratizing knowledge that was previously bottlenecked. The learning cycle gets faster, with higher-quality repetitions, and the possibilities are endless.

The difference isn't just philosophical — it's practical. The amplification approach leverages both AI's pattern recognition strengths and human judgment, context understanding, and creative problem solving. The extraction approach treats humans as expensive hardware to be optimized away, missing the irreplaceable value of human insight, relationships, and adaptability.

Three Lean AI Principles

For lean practitioners navigating this landscape, three principles can guide decision-making in the application of Narrow AI today and Big AI much farther down the road:

Start with value, not technology: The first question isn't "How can we use AI?" but "Where are we failing to deliver value to customers?" If implementing AI increases value for customers and reduces waste in processes, it's worth pursuing. If it's technology for technology's sake, it's muda. This principle naturally leads to focused applications where AI genuinely improves the quality of outcomes, rather than to impressive but pointless demonstrations.

Respect for people with AI amplification: The goal for what we term Lean AI should be making people more effective problem solvers, managers, or coaches — not eliminating people. AI should democratize access to expertise, speed up analysis, and free humans for higher-value work like coaching, relationship building, and creative problem solving. We should strive to make people 10X more powerful when it comes to improvement. When evaluating any AI application, ask: “Does this make our people more capable, or are we trying to make them less necessary?”

Go see, ask why, augment with AI: Gemba remains essential. AI can surface patterns in data and suggest root causes, but it can't replace direct observation and human questioning. However, AI can dramatically enhance what you learn from going and seeing. It can help analyze what you observe, check your thinking against broader patterns, and provide access to expert knowledge that wasn't previously available. The combination of human observation and AI analysis is more powerful than either alone.

Evaluating AI against Lean Principles

In the next several years, I suspect the most productive AI applications will be in knowledge work. Large language models work best with text and, to a lesser extent, charts and images. This will enable improvements in tasks that were difficult to enhance in the past. The flow system for knowledge work suddenly has a very viable path for improvement: AI makes it easier to map and standardize knowledge work that was previously invisible and hard to analyze. Workflow automation tools with low- or no-code interfaces, such as Zapier or n8n, will transform knowledge work. AI-enhanced learning, when used correctly, can help improve worker skills. These applications align with lean principles because they reduce waste (faster analysis, better documentation) while respecting people and improving their capabilities (augmenting rather than replacing expertise).

When evaluating AI opportunities, apply the three principles as filters. Does this increase customer value? Does this amplify human capability? Does this enhance our ability to see and understand problems? If the answer is “yes” to all three, it's likely a good Lean AI application.

Watch for red flags: AI implementations focused primarily on headcount reduction, surveillance applications that increase fear rather than capability, or technology deployments that deskill workers rather than upskill them. These suggest the extraction model rather than the amplification model.

The Path Forward

The lean community has a unique opportunity and responsibility at this moment. With decades of experience balancing technology adoption with respect for people, lean practitioners understand something that pure technologists often miss: sustainable improvement comes from amplifying human capability, not replacing it.

The Narrow AI disruptions happening now are a preview of bigger changes coming as the five pillars of AI gradually converge. The patterns we establish today — amplification vs. extraction, value focus vs. technology focus, human-AI collaboration vs. human replacement — will shape how that convergence unfolds.

We don't need to choose between embracing AI uncritically or rejecting it entirely. We need to apply Lean AI thinking: start with value, respect people, and use these powerful tools to solve real problems more effectively. The choice we make will determine whether AI becomes a force for human flourishing or just another way to concentrate wealth and power.

The technology is neither inherently good nor inherently bad. It's inherently powerful. What matters is how we choose to use it.

[1] Jason Ma, “‘Godfather of AI’ says the technology will create massive unemployment and send profits soaring — ‘that is the capitalist system’,” Fortune, Sept. 6, 2025.

[2] “Global Crossing Is Reborn,” Praetorian Capital, Aug. 20, 2025.

[3] Rebecca Bellan, “Tesla Optimus bots were controlled by humans during the ‘We, Robot’ event,” TechCrunch, Oct. 14, 2024.

[4] Nick Lichtenberg, “This Stanford computer science professor went to written exams 2 years ago because of AI. He says his students insisted on it,” Fortune, Sept. 7, 2025.

[5] Frank Landymore, “McKinsey Terrified as It Realizes AI Can Do Its Job Perfectly,” Futurism, Aug. 15, 2025.

About Art Smalley

Art Smalley is a leadership and Lean management expert with nearly 40 years of experience in operations and continuous improvement. He worked at Toyota and McKinsey & Company. He is a senior advisor to the Lean Enterprise Institute, author of four books, and has helped organizations worldwide implement sustainable improvement practices.

Related Articles

leantoyota

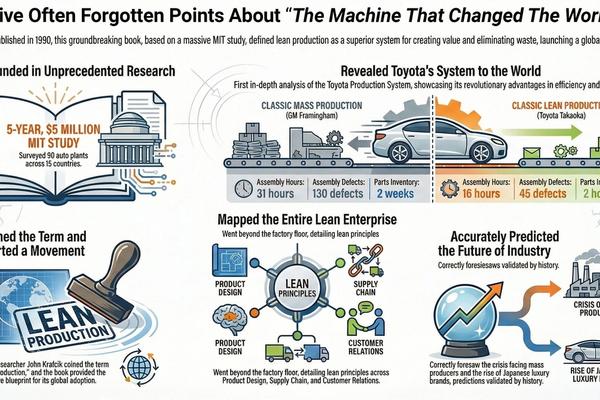

Reflecting On Machine That Changed The World - 35 Years Later

The Machine That Changed the World remains, 35 years later, the most comprehensive and empirically grounded explanation of Toyota as a complete enterprise system—not a collection of factory tools. Built on unmatched MIT research, the book quantified a structural performance gap between lean and mass production across product development, manufacturing, supply chains, and management, launching the Lean movement and defining its core vocabulary. Despite decades of Lean activity since, no later work has equaled its enterprise-wide scope, comparative rigor, or lasting explanatory power

problem-solvingleadership-management

The Coffee House Delusion - Why "Liquid Networks" Are Overrated

For years we’ve been told innovation comes from “creative collisions” — open offices, hallway chats, coffeehouse serendipity. But the evidence suggests that’s mostly a myth. Real breakthroughs are usually born in deep solitude, refined through structured review, and only then spread through networks.

leantoyota

Which Supermarket: Reviewing the Origins of Toyota's Pull System

An amusing internet tale about Toyota often goes something like this: the company’s legendary pull system sprang from Taiichi Ohno's epiphany during a visit to a Memphis-based supermarket chain called Piggly Wiggly. According to the legend, Ohno visited the store during a 1956 U.S. visit and was amazed by the way shelves were instantly refilled as customers shopped. And the thus the concept for his replenishment “supermarket” style of production was born. Despite the fact that no one can seem to locate a primary source for this story, it has been widely repeated for decades on internet blogs, LinkedIn, Wikipedia, and even by major news outlets like Reuters.